The Pentagon just signed seven contracts with competitors of Anthropic. The press is calling it an "acceleration" of AI integration. They are wrong. This isn't an acceleration; it’s a frantic attempt to buy a seat at a table where the rules of engagement haven't even been written.

By diversifying across seven different vendors, the Department of Defense (DoD) isn't building a resilient "mosaic of intelligence." It’s building a digital Tower of Babel. In the high-stakes world of national security, fragmentation is just another word for failure.

The Myth of LLM Interoperability

The lazy consensus suggests that more models equal more options. If Anthropic’s Claude 3.5 Sonnet has a specific bias or safety guardrail that hinders a mission, the DoD can just swap it for a model from one of these seven new partners.

That logic is fundamentally flawed. Large Language Models (LLMs) are not interchangeable batteries. They are complex, temperamental black boxes. Each has a unique "latent space" and distinct ways of responding to prompts.

When you build a defense system on top of an AI, you are fine-tuning and prompt engineering for one specific model. Switch from Model A to Model B and the work does not transfer: the prompts, the fine-tuned weights, and the evaluation baselines all have to be redone. The "emergent behaviors" change. The reliability you measured no longer holds. By spreading money across seven different competitors, the Pentagon is ensuring that no single system will ever be deeply integrated enough to be useful in a theater of war.
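To make the swap problem concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `model_a` and `model_b` are stubs standing in for two vendors' models, and `parse_assessment` is an illustrative integration layer, not anyone's real system. The only point is that glue code written against one model's output habits can fail outright on another's, even when the underlying answer is identical.

```python
import json

# Hypothetical stand-ins for two vendors' models. A real system would call a
# network API; these stubs only mimic two different output conventions.
def model_a(prompt: str) -> str:
    # Assumption: Model A tends to reply with bare JSON.
    return '{"threat_level": "low", "confidence": 0.82}'

def model_b(prompt: str) -> str:
    # Assumption: Model B wraps the same content in conversational text.
    return (
        "Sure! Here is my assessment:\n"
        '{"threat_level": "low", "confidence": 0.82}\n'
        "Let me know if you need more."
    )

def parse_assessment(raw: str) -> dict:
    """Parser written against Model A's habits: expects the reply to be pure JSON."""
    return json.loads(raw)

def run_pipeline(model, prompt: str) -> dict:
    """Return the parsed assessment, or an error record if parsing fails."""
    try:
        return {"ok": True, "data": parse_assessment(model(prompt))}
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": str(exc)}

PROMPT = "Classify the threat level of the following report as JSON."
result_a = run_pipeline(model_a, PROMPT)
result_b = run_pipeline(model_b, PROMPT)

print("Model A parsed:", result_a["ok"])  # True
print("Model B parsed:", result_b["ok"])  # False: same answer, different wrapper
```

A sturdier parser would fix this one failure. It would not fix the deeper ones, such as different refusal behavior or different error patterns, which only show up under evaluation.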

Buying the Hype Instead of the Hardware

We are seeing a repeat of the "Cloud Wars" of the last decade. The Pentagon spent years fighting over JEDI, a contract worth up to $10 billion that it ultimately canceled, and then replaced it with JWCC, all in an effort to buy "the cloud." They forgot that cloud is just someone else’s computer.

Now they are trying to buy "the AI." But AI isn't a commodity you can stock in a warehouse. It is a live service that requires massive, specialized compute power, which today largely means NVIDIA GPUs such as the H100 and B200.

The seven companies the Pentagon just signed with are all fighting for the same crumbs of compute. If the DoD wants actual AI dominance, they shouldn't be signing contracts with software startups that are beholden to Big Tech’s infrastructure. They should be building their own sovereign compute clusters.

Instead, we are watching a massive transfer of taxpayer wealth to VC-backed entities that are essentially "API wrappers" for larger tech giants. It is a strategy built on branding, not ballistics.

The Security Theater of Competition

The DoD claims this move prevents "vendor lock-in." This is a classic bureaucratic distraction.

In military hardware, lock-in is often a feature, not a bug. You want your fighter jet parts to be standardized. You want your encryption protocols to be uniform. Diversity in weapon systems creates a logistical nightmare.

Applying the "competition is always good" mantra to AI ignores the reality of data poisoning and supply chain security. Each new vendor represents a new attack vector. Each new API is a potential leak. By opening the door to seven different firms, the Pentagon has multiplied its attack surface for cyber espionage roughly sevenfold.
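A back-of-the-envelope sketch shows how that exposure scales. It assumes, purely for illustration, that each vendor carries the same independent chance of compromise over some period; the 5% figure is a made-up placeholder, not a measured rate, and real breach risks are neither equal nor independent.

```python
def p_any_breach(p_single: float, n_vendors: int) -> float:
    """Probability that at least one of n vendors is compromised,
    assuming each is breached independently with probability p_single."""
    return 1.0 - (1.0 - p_single) ** n_vendors

P_SINGLE = 0.05  # hypothetical per-vendor breach probability

one_vendor = p_any_breach(P_SINGLE, 1)
seven_vendors = p_any_breach(P_SINGLE, 7)

print(f"1 vendor:  {one_vendor:.3f}")     # 0.050
print(f"7 vendors: {seven_vendors:.3f}")  # 0.302
print(f"ratio:     {seven_vendors / one_vendor:.1f}x")  # 6.0x
```

Under these assumptions the risk grows a little less than sevenfold, because the simple multiple `n * p` is an upper bound on the true figure. For small per-vendor probabilities the two are close, which is why "sevenfold" works as a rough rule of thumb.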

The False Promise of "Safety"

Many of these new contracts are being sold under the guise of "AI safety." The narrative is that by not relying solely on Anthropic—a company famous for its constitutional AI approach—the Pentagon can explore models with different ethical constraints.

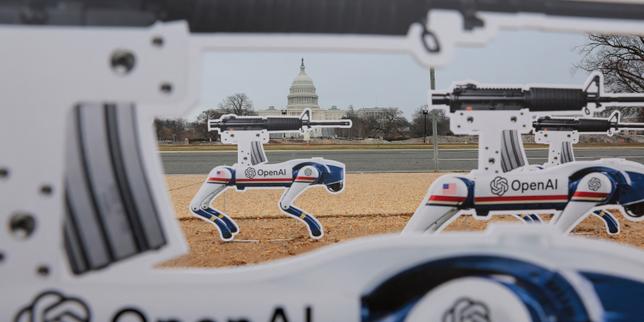

This is a polite way of saying they want models that are easier to weaponize.

But here is the hard truth: guardrails often cost capability, and removing them costs reliability. If you strip away the guardrails to make an AI more aggressive in tactical analysis, you also make it more prone to "hallucinations." In a civilian chatbot, a hallucination is a funny mistake. In a command-and-control system, a hallucination is a war crime.

The Pentagon is trying to have it both ways. They want the speed of a startup and the reliability of a defense prime like Lockheed Martin. You cannot have both.

The "Middleman" Tax

I have seen the DoD blow millions on "innovation hubs" that do nothing but produce slide decks. These seven contracts feel like more of the same.

These firms are not providing a unique service. They are providing access. They are middlemen between the raw power of foundation models and the specific needs of the military.

Imagine a scenario where a frontline commander needs a real-time translation of intercepted signals. Does he care that the AI was sourced from a "diverse ecosystem of vendors"? No. He cares about latency. He cares about accuracy.

By the time the data clears the separate security reviews and integration layers that seven different contractors bring with them, the intelligence will be stale. The bureaucracy of managing seven contracts will move slower than the technology itself.

Why the "Fail Fast" Mentality Fails in Defense

The Silicon Valley ethos of "move fast and break things" works for photo-sharing apps. It does not work for the nuclear triad.

The Pentagon is currently obsessed with "commercial off-the-shelf" (COTS) AI. They think they can just plug in the latest LLM and it will magically solve logistics. This ignores the domain gap. These models were trained largely on the public internet. They were trained on Reddit threads and Wikipedia. They were not trained on classified doctrine or the nuances of electronic warfare.

No amount of signing ceremonies with Anthropic's rivals will fix that.

The Reality of the AI Arms Race

We are in an arms race with adversaries who do not have to worry about vendor diversity or "fair" contracting. They are picking one direction and sprinting.

The Pentagon is trying to run seven different races at once.

If you want to win the AI war, you don't sign seven contracts. You pick the best tech, you give them the most compute, and you iterate until it works. Everything else is just expensive noise.

The DoD is currently paying for the noise.

Stop treating AI like a luxury office supply. It is a weapon system. Treat it with the same singular focus you gave the Manhattan Project.

Diversity is a strength in a workforce; in a mission-critical tech stack, it is a liability.

Pick a lane. Build the compute. Stop the press releases.