The biggest social media companies just blinked. After years of resisting outside interference, Meta, TikTok, Snap, and Google have finally agreed to a standardized rating system for teen safety. It sounds like a massive victory for digital wellbeing. Parents might think this is the "ESRB moment" for social media—a clear label that tells you exactly what’s behind the curtain. But if you've been watching this space, you know the devil is always in the fine print.

Social media isn't a video game. It's a living, breathing algorithm that changes every five seconds based on who you follow and what you linger on. A static rating on an app store doesn't capture the experience of a fourteen-year-old falling down a rabbit hole of body dysmorphia or predatory DMs. We need to talk about what these "teen safety ratings" actually cover, why the tech giants are suddenly playing ball, and how much of this is just clever PR to avoid harsher regulation.

The new rules of the digital road

The agreement centers on the Digital Trust & Safety Partnership (DTSP). This isn't a government mandate. It’s an industry-led initiative where companies agree to be audited against a set of "Best Practices." Think of it as a voluntary report card. The goal is to provide a consistent framework so that a "Safety Rating" on TikTok means roughly the same thing as one on Instagram.

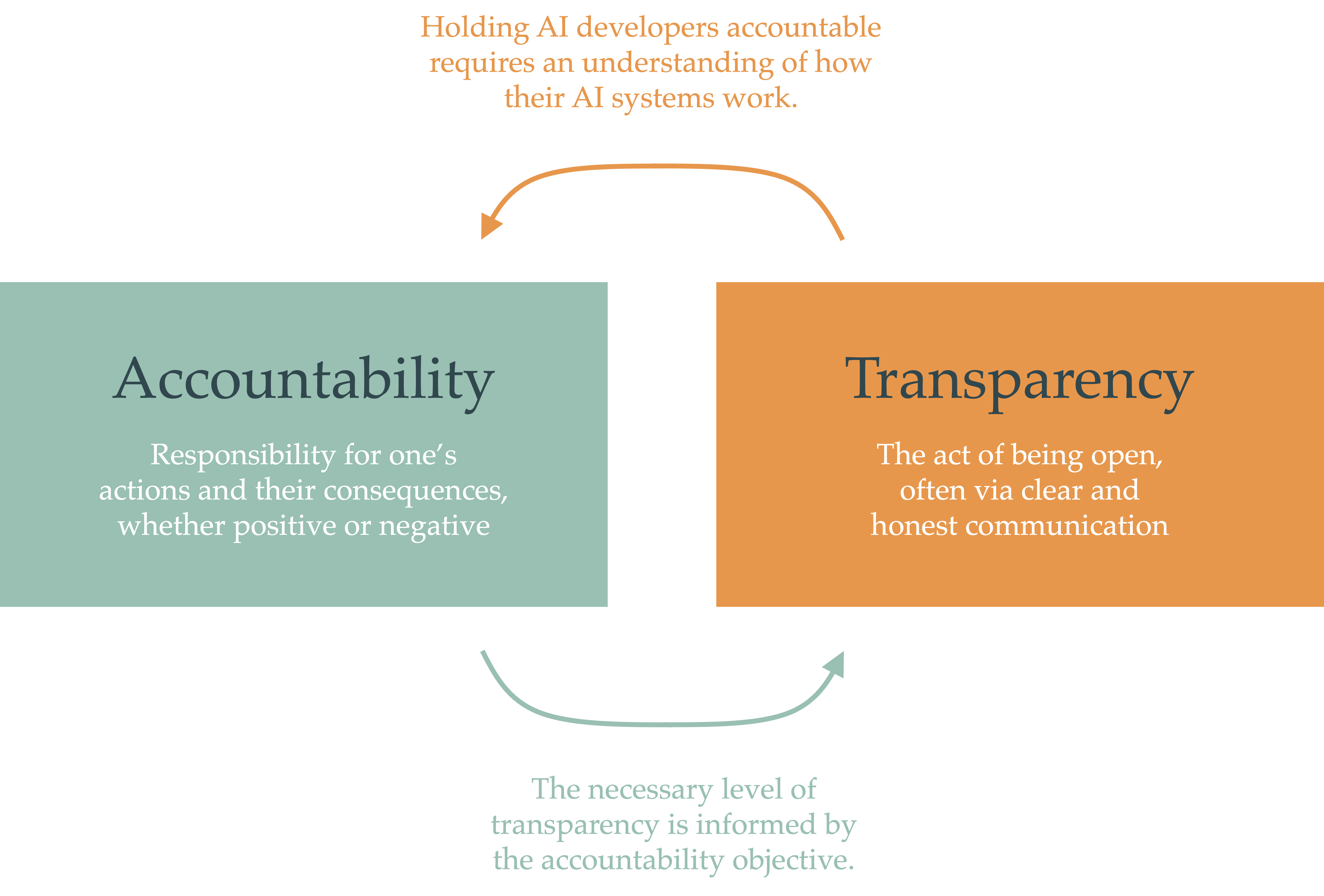

Most people don't realize how fragmented safety standards used to be. One app might define "harmful content" as anything illegal, while another includes "borderline" content like extreme dieting or dangerous stunts. By agreeing to these ratings, these platforms are essentially saying they’ll let third-party auditors look at their homework. They’re committing to transparency about how they moderate content, how they verify age, and how they handle reports of grooming or bullying.

It’s a step forward. It really is. But it’s also a defensive crouch.

Why the sudden urge to be transparent

Tech companies don't do things out of the goodness of their hearts. They do them because they’re scared of a courtroom or a Senate hearing. Right now, the legal pressure is at an all-time high. Between the proposed Kids Online Safety Act (KOSA) in the United States and the Digital Services Act (DSA) in Europe, the "wild west" era of social media is ending whether Mark Zuckerberg likes it or not.

By setting their own safety ratings now, these companies are trying to prove they can self-regulate. It’s a classic move. If they can show the public (and the politicians) that they have a "rigorous" rating system in place, they can argue that new, more restrictive laws are unnecessary. They want to be the ones holding the yardstick. If you let the government define "safety," you might end up with a product that isn't profitable anymore. If you define it yourself, you can bake in enough loopholes to keep the engagement high.

What these ratings won't tell you

Here is the part that most news reports are skipping over. These ratings are about processes, not necessarily outcomes.

An app can get a high safety rating because it has a "robust" system for reporting bullying. That doesn't mean bullying isn't happening. It just means there’s a button to click when it does. It’s like a car company getting a five-star safety rating because the airbags deploy correctly, even if the brakes fail every thousand miles.

The ratings won't account for:

- The specific psychological impact of the "For You" page.

- The speed at which a new account can find self-harm content.

- The effectiveness of AI-driven age verification (which is still notoriously easy to bypass).

- The "peer pressure" mechanics built into features like Snapstreaks.

You have to remember that these platforms are designed to keep users scrolling. That fundamental business model is often at odds with "safety." A safe app is often a boring app, and boring apps don't make billions in ad revenue.

The role of third party auditors

The DTSP uses external firms to verify that Meta, TikTok, and others are actually doing what they say they're doing. This is the most "expert" part of the whole deal. In the past, we just had to take their word for it. "We removed 99% of hate speech before anyone saw it," they’d claim. Now, an auditor gets to look at the internal data to see if that 99% figure is actually legit or if the math is fuzzy.

This adds a layer of accountability that hasn't existed before. If an auditor finds that TikTok’s age-gating is a joke, the company can't just sweep that under the rug anymore. The ratings reflect those failures. For parents, this is the most useful part of the update. You’re not just looking at a "12+" label; you’re looking at a stamp of approval from an organization that has seen the internal receipts.

How to use these ratings without being fooled

Don't treat a safety rating as a "set it and forget it" solution. Use it as a conversation starter. If an app has a lower rating for "private communication," that’s your cue to sit down with your teen and look at their privacy settings together.

- Check the specific "risk categories" listed in the rating. Most will break down content into categories like violence, drugs, or sexual content.

- Look for the "audit date." A rating from two years ago is worthless in tech time.

- Compare ratings across platforms. If TikTok has a lower safety score than YouTube Shorts, ask why. Usually, it's because of how they handle direct messaging or live streaming.

Beyond the labels

The reality is that no rating system can replace active parenting. You can’t outsource your kid’s digital safety to an industry trade group. These companies are agreeing to these ratings because they want to stay in business, not because they’ve suddenly found a moral compass.

The next time you see a "Teen Safety" badge on an app, realize it's a floor, not a ceiling. It’s the bare minimum they had to do to keep the regulators off their backs. It's a tool for you, but it’s a shield for them.

Start by auditing your own kid's "Following" list. Look at the "Suggested" videos the algorithm is pushing. Ratings tell you how the machine is built; your eyes tell you what the machine is actually producing. Turn off the "Discoverability" settings that allow strangers to find your teen by phone number. Disable the "Live" features if your teen is under sixteen. Use the tools the platforms give you, but never trust that the platform has your kid's best interests at heart. They’re in the business of attention, and safety is just a cost of doing business.