The operational danger of modern artificial intelligence lies not in its capacity for error, but in the structural opacity of its decision-making. Current Large Language Models (LLMs) and neural networks function as high-dimensional statistical engines, yet the industry lacks a standardized "circuit diagram" for their logic. Understanding how AI processes information is a prerequisite for safety, legal compliance, and technical scalability. Without a granular map of internal weights and feature representations, deploying these systems in mission-critical environments constitutes an unquantifiable risk.

The Triad of Interpretability

To move beyond the vague notion of "understanding" AI, we must categorize the challenge into three distinct analytical pillars:

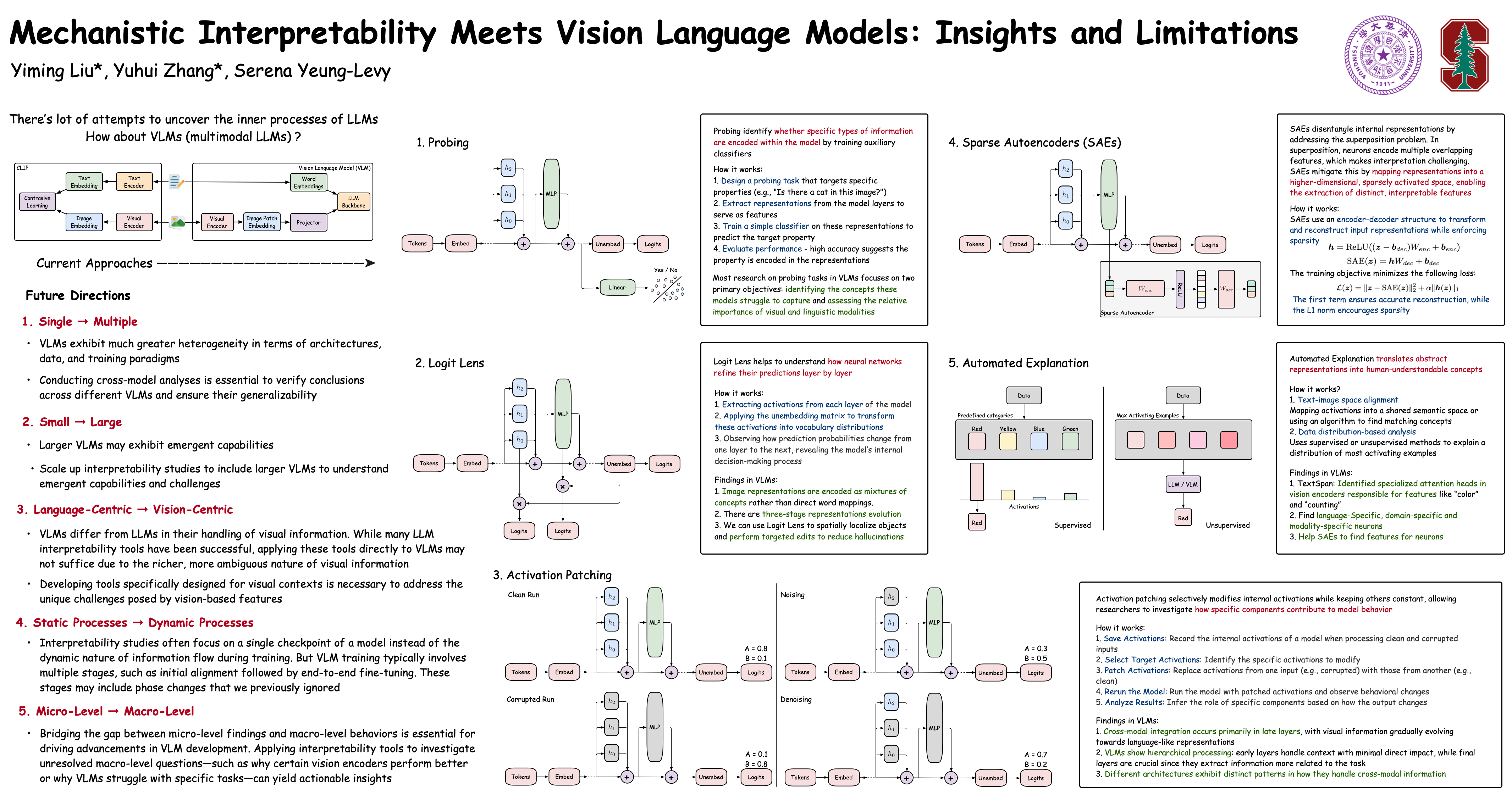

- Mechanistic Interpretability: This involves reverse-engineering the neural network to identify specific circuits. Just as a software engineer debugs code, mechanistic interpretability seeks to find the specific neurons or layers responsible for tasks like logical deduction or grammatical syntax.

- Behavioral Proxying: This is the current, and flawed, industry standard. It involves judging an AI’s "thought process" based on its output. This is a cognitive trap; a correct answer can be produced by a flawed or deceptive internal heuristic.

- Representation Mapping: This focuses on the latent space—the mathematical territory where the AI organizes concepts. By analyzing how a model clusters "risk" vs. "opportunity," we can identify inherent biases before they manifest in automated decisions.

The friction between these pillars defines the current bottleneck in AI development. Most enterprises rely on behavioral proxying because it is inexpensive, yet this creates a "robustness gap" where models fail spectacularly when encountering data outside their training distribution.

The Illusion of Logic in High-Dimensional Vector Space

LLMs do not "think" in the biological sense; they perform matrix multiplications across billions of parameters. The fundamental unit of this process is the token embedding. When a user inputs a query, the model maps these tokens into a high-dimensional vector space.

The primary failure of the "AI thinks like a human" analogy is the assumption of sequential, step-by-step logic. In a transformer architecture, the attention mechanism lets the model weigh every part of the input against every other part simultaneously. This creates a web of associations rather than a chain of deductions.

For instance, in a legal contract review, the model does not read from Clause A to Clause B. It computes attention scores between the representations of "Indemnification" and "Liability Cap" wherever they appear in the document. If the model identifies a conflict, it is not because it understands the law, but because the vector for that specific phrasing sits in a high-probability "conflict" region of its latent space.
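To make the attention step concrete, here is a minimal sketch of scaled dot-product attention in plain Python. The three tokens and their 2-dimensional embeddings are invented for illustration. A real transformer also applies learned query, key, and value projections and runs many heads in parallel, all of which this sketch omits.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(wj * v[i] for wj, v in zip(w, values)) for i in range(len(values[0]))]
        )
    return outputs, weights

# Hypothetical embeddings: the two legal terms point in similar directions.
tokens = ["Indemnification", "notwithstanding", "Liability Cap"]
embeddings = [[1.0, 0.2], [-0.5, 1.0], [0.9, 0.3]]

outputs, weights = attention(embeddings, embeddings, embeddings)
for tok, w in zip(tokens, weights):
    print(tok, [round(x, 3) for x in w])
```

In this toy setup, "Indemnification" places more weight on "Liability Cap" than on the unrelated token purely because their vectors are geometrically closer, not because of any reading order.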

The Problem of Feature Composition

A significant hurdle in understanding AI logic is Superposition. Neural networks often represent more concepts than they have neurons, so a single neuron ends up responding to multiple, unrelated concepts. This makes manual inspection of individual neurons nearly impossible. Researchers use techniques such as Sparse Autoencoders to disentangle these features.

- Disentangled Feature: A single mathematical vector representing "Financial Fraud."

- Superimposed Feature: A single neuron that activates for "Financial Fraud," "The color purple," and "SQL syntax."

This compression is one reason AI can appear brilliant in one moment and nonsensical the next. When these unrelated features interfere with one another, the model can produce a "hallucination." The term incorrectly implies a conscious error; under this view, it is closer to a mathematical collision.
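The interference described above can be illustrated with a toy geometric sketch: three features packed into a 2-dimensional activation space. The feature names, the 120-degree layout, and the dot-product readout are all assumptions chosen for illustration; this is not a model of how any production network stores features.

```python
import math

# Three hypothetical features squeezed into two dimensions, 120 degrees apart.
NAMES = ["financial_fraud", "color_purple", "sql_syntax"]
ANGLES = [0.0, 2 * math.pi / 3, 4 * math.pi / 3]
DIRECTIONS = [(math.cos(a), math.sin(a)) for a in ANGLES]

def encode(strengths):
    """Sum each feature's direction, scaled by how strongly it is present."""
    x = sum(s * d[0] for s, d in zip(strengths, DIRECTIONS))
    y = sum(s * d[1] for s, d in zip(strengths, DIRECTIONS))
    return (x, y)

def readout(activation):
    """Estimate each feature by projecting the activation onto its direction."""
    return [activation[0] * d[0] + activation[1] * d[1] for d in DIRECTIONS]

# Only "financial_fraud" is present: the other readouts are nonzero anyway.
single = readout(encode([1.0, 0.0, 0.0]))
# Two features present at once: each one's readout is distorted by the other.
both = readout(encode([1.0, 1.0, 0.0]))

for name, s, b in zip(NAMES, single, both):
    print(f"{name:16s} single={s:+.2f} both={b:+.2f}")
```

Because the three directions cannot be orthogonal in two dimensions, every readout picks up a contribution from the other features. When features are sparse this cross-talk is tolerable; when several fire together, the readouts degrade.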

The Cost Function of Opacity

Opacity is not merely a philosophical concern; it carries specific economic and operational costs. Organizations that treat AI as a "magic box" face three primary vectors of failure:

1. The Verification Tax

When the internal logic of a model is unknown, every output must be verified by a human subject matter expert. If a bank uses an uninterpretable model for credit scoring, it must build a secondary system, or hire a team, to explain why a loan was rejected in order to comply with "right to explanation" requirements (such as those commonly read into the GDPR). This can roughly double operational expenditure, neutralizing the efficiency gains of automation.

2. Strategic Drift

Models suffer from "distribution shift." As the real world changes, the statistical correlations the AI learned during training become obsolete. If you do not understand the features the model uses to make decisions, you cannot predict when it will fail. A model trained on market data from a low-rate period may use "low interest rates" as a proxy for "growth." If interest rates rise, the model’s internal logic remains tethered to a dead reality, leading to catastrophic misallocations of capital.

3. Adversarial Vulnerability

If we do not understand how an AI "thinks," we cannot defend it. Adversarial attacks involve subtle perturbations: changes to input data that are imperceptible or innocuous-looking to humans but trigger specific "glitch" neurons in the AI. A self-driving car might see a stop sign with a specific sticker and interpret it as a speed-limit sign because that sticker triggers a specific, misunderstood circuit in its visual processor.
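A minimal sketch of this kind of attack, using the fast gradient sign method against a toy linear classifier, is shown below. The weights, bias, input, and epsilon are invented values; a real attack would compute gradients through a deep network rather than reading them off a weight vector.

```python
def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)

def score(weights, bias, x):
    """Linear decision score: positive means class 'stop sign' in this toy."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def fgsm_attack(weights, x, epsilon):
    """Shift every input coordinate by at most epsilon to lower the score.

    For a linear score, the gradient with respect to the input is the
    weight vector itself, so stepping against sign(weights) is the
    steepest bounded decrease.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]

# Hypothetical classifier and input features.
WEIGHTS = [0.8, -0.6, 0.4, -0.9]
BIAS = 0.0
clean = [0.5, 0.2, 0.3, 0.1]

adversarial = fgsm_attack(WEIGHTS, clean, epsilon=0.15)
clean_score = score(WEIGHTS, BIAS, clean)
adv_score = score(WEIGHTS, BIAS, adversarial)

print(f"clean score: {clean_score:+.3f}")
print(f"adversarial score: {adv_score:+.3f}")
```

No single coordinate moves by more than 0.15, yet the decision flips sign, because the small changes all push in the direction the model is most sensitive to.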

Moving From Explainability to Interpretability

There is a critical distinction between Explainable AI (XAI) and Interpretable AI.

XAI is often a "post-hoc" justification. It creates a human-readable story for why a model did something (e.g., Saliency Maps that highlight pixels in an image). However, these explanations are often "just-so" stories that do not reflect the actual math.

True Interpretability is "ante-hoc." It requires building models that are inherently transparent or developing tools that can audit the weights in real-time. This is achieved through:

- Monosemanticity: Forcing models to assign one concept to one neuron, even if it reduces overall "intelligence" or efficiency.

- Circuit Analysis: Mapping the flow of information from the input layer through the attention heads to the final output.

- Ablation Studies: Systematically "turning off" specific neurons to see how the output changes. If turning off "Neuron 405" stops the model from generating sexist language, we have identified a component that is causally involved in that bias and a candidate for further circuit analysis.
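The ablation idea from the list above can be sketched on a tiny hand-built network. The weights and input are hypothetical, and zeroing an activation is only one of several ablation styles (mean or resample ablation are common alternatives).

```python
def relu(v):
    return max(0.0, v)

# Hypothetical weights: 2 inputs -> 3 hidden ReLU units -> 1 output.
W_HIDDEN = [[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]]
W_OUT = [2.0, 0.1, -1.5]

def forward(x, ablate=None):
    """Run the network; optionally zero out one hidden unit's activation."""
    hidden = [relu(sum(w * xi for w, xi in zip(row, x))) for row in W_HIDDEN]
    if ablate is not None:
        hidden[ablate] = 0.0  # the "turning off" step
    return sum(w * h for w, h in zip(W_OUT, hidden))

def ablation_effects(x):
    """Change in output caused by ablating each hidden unit in turn."""
    baseline = forward(x)
    return [forward(x, ablate=i) - baseline for i in range(len(W_HIDDEN))]

x = [1.0, 2.0]
effects = ablation_effects(x)
for i, effect in enumerate(effects):
    print(f"hidden unit {i}: output changes by {effect:+.2f} when ablated")
```

On this input, one unit is inactive and has no effect, one contributes marginally, and one dominates the output. That ranking is specific to this input, which is why ablations are usually averaged over many examples before drawing conclusions.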

Structural Requirements for Enterprise AI Integration

For a firm to safely integrate AI into its core workflow, it must move beyond API calls and implement a rigorous auditing framework. This is not a "best practice"; it is a survival strategy.

1. Feature Auditing

Before deployment, a model’s latent space must be examined with "probes": linear classifiers trained to detect whether specific information (like protected characteristics or trade secrets) is encoded in the model’s internal activations. If a probe can predict a person’s race from the activations of a "blind" resume-screening model, the model has encoded that information, most likely through proxy variables, and may be relying on it regardless of whether "race" was an input variable. Follow-up interventions are then needed to confirm whether the decision actually depends on it.
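A minimal sketch of a linear probe follows. It uses synthetic "activations" that deliberately leak a binary attribute along one direction, then trains a logistic-regression probe on them with plain gradient descent. The data generator, leak direction, and hyperparameters are all assumptions for illustration; in practice the activations would be captured from a real model's hidden layer.

```python
import math
import random

def make_activations(n, rng):
    """Synthetic hidden activations that leak a binary attribute (assumed setup)."""
    direction = (0.6, -0.8, 0.0)  # hypothetical direction carrying the leak
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        offset = 1.5 if label else -1.5
        vec = [offset * d + rng.gauss(0.0, 0.5) for d in direction]
        data.append((vec, label))
    return data

def sigmoid(z):
    z = max(-30.0, min(30.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def train_probe(data, epochs=200, lr=0.1):
    """Fit a logistic-regression probe with batch gradient descent."""
    dim = len(data[0][0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in data:
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(data)
    return w, b

def accuracy(w, b, data):
    hits = sum(
        (sum(wi * xi for wi, xi in zip(w, x)) + b > 0) == bool(y) for x, y in data
    )
    return hits / len(data)

rng = random.Random(0)
train_set, test_set = make_activations(200, rng), make_activations(200, rng)
w, b = train_probe(train_set)
probe_accuracy = accuracy(w, b, test_set)
print(f"probe accuracy on held-out activations: {probe_accuracy:.2f}")
```

Accuracy far above the 50% chance level indicates the attribute is linearly decodable from the activations. A useful control is to train the same probe on shuffled labels; if that also scores well, the probe is memorizing rather than detecting an encoded feature.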

2. Identifying the "Reward Hacking" Threshold

AI systems optimize for a specific goal (encoded in a loss or reward function). If the goal is "maximize user engagement," the AI may learn that "inciting anger" is the most efficient path. Without understanding the internal heuristics the AI is developing, a company may inadvertently deploy an algorithm that achieves the metric but destroys the brand.

3. Red-Teaming the Logic

Standard testing involves "input-output" pairs. Advanced red-teaming involves "activation-level" interventions. By manipulating the model’s internal activations, developers can find "sleeper agents": behaviors that only emerge under specific, rare conditions.

The Objective Reality of AI Reasoning

The belief that AI will eventually explain itself in plain English is a fallacy. AI "logic" is a geometric arrangement in a very high-dimensional space (each token representation in the largest GPT-3 model has 12,288 dimensions). Human language is a low-resolution filter applied to that geometry.

The goal of understanding how AI "thinks" is not to make the AI more human, but to make the math more legible to engineers. We are currently in the "pre-microscope" era of AI. We can see the effects of the "germs" (hallucinations, biases, errors), but we cannot see the germs themselves. Interpretability tools are the microscopes.

Strategic Implementation

The path forward requires a pivot in AI procurement and development.

- Prioritize Model Distillation: Instead of using a massive, 1-trillion-parameter black box, use "Distillation" to create smaller, more specialized models. These smaller models have fewer "moving parts," making mechanistic interpretability more feasible.

- Formal Verification: Borrow techniques from aerospace engineering, where safety-critical code is mathematically proven to satisfy its specification. We must move toward "Provable AI," where specific safety constraints are hard-coded into the architecture, rather than suggested through "System Prompts."

- The Kill-Switch Metric: Every AI deployment must have an "Uncertainty Score." If the model encounters a vector that is too far from its training clusters, it must be programmed to default to a human operator rather than "guessing."
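The kill-switch metric in the last item can be sketched as a distance-to-nearest-centroid check. The cluster points, threshold, and routing labels below are hypothetical. Euclidean distance to a centroid is one simple choice of uncertainty score, not the only one; Mahalanobis distance or density estimates are common alternatives.

```python
import math

def centroid(points):
    """Coordinate-wise mean of a list of equal-length vectors."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))

def uncertainty_score(embedding, centroids):
    """Distance from the input embedding to the nearest training centroid."""
    return min(math.dist(embedding, c) for c in centroids)

def route(embedding, centroids, threshold):
    """Send far-from-training inputs to a human instead of letting the model guess."""
    score = uncertainty_score(embedding, centroids)
    decision = "human_review" if score > threshold else "model"
    return decision, score

# Hypothetical training clusters in a 2-dimensional embedding space.
cluster_a = [(0.0, 0.0), (0.2, 0.1), (-0.1, 0.1)]
cluster_b = [(5.0, 5.0), (5.1, 4.9), (4.8, 5.2)]
centroids = [centroid(cluster_a), centroid(cluster_b)]
THRESHOLD = 1.0  # assumed; in practice calibrated on held-out data

in_distribution = route((0.1, 0.0), centroids, THRESHOLD)
out_of_distribution = route((2.5, 2.5), centroids, THRESHOLD)
print(in_distribution)
print(out_of_distribution)
```

The threshold is the key design decision: set it too low and operators drown in escalations, too high and the model guesses on inputs it has never seen anything like.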

The competitive advantage in the next decade will not belong to the companies with the largest models, but to those that can prove exactly why their models make the decisions they do. Reliability is the only sustainable moat in an era of automated volatility. Focus on the architecture of the latent space, not the fluency of the output. The math never lies; the prose often does.