The rain against the floor-to-ceiling windows of the London office was a relentless, rhythmic patter. Aiden watched the gray light bleed across the monitors. Inside his head, the algorithms were spinning, a web of complex neural pathways and weights that he had spent three years fine-tuning. He was part of the team that built the foundational models used across the tech giant's ecosystem. He loved the work. He loved the quiet hum of the servers, the late-night breakthroughs, the sheer elegance of mathematics applied to the world's most intractable problems.

But tonight, the silence felt different. It was heavy with a quiet, creeping realization.

The code he and his colleagues had written, originally designed to optimize energy grids and predict how proteins fold, was beginning to find new homes. The research was being adapted. It was being repurposed.

Aiden remembered the day the internal memo dropped. It was not a grand announcement, but a quiet update to a project timeline, buried in a thread about enterprise expansion. The ink was digital, but the implications were stark. The models were being pitched to the United States Department of Defense. The technology that was supposed to cure diseases and organize information was now being integrated into defense logistics and, potentially, targeting systems.

To understand why this caused a quiet, seismic shift inside the research labs of London, you have to look at the nature of artificial intelligence itself. Think of these large models not as tools, but as a massive river. When you build the river, you decide where the banks go. You decide whether the water irrigates the valley or floods the village. Aiden and his peers had believed they were building an irrigation system. Now, they were being told the water would be diverted to power a siege engine.

The researchers were not Luddites. They understood that technology does not exist in a vacuum. Yet, the leap from academic exploration to military application felt like a violation of an unspoken contract.

The Quiet Awakening of the Workforce

In the early days of artificial intelligence, the industry wore a cloak of pure optimism. Engineers believed their work was inherently neutral. Code was just math. Data was just history. It took decades of unintended bias and unforeseen harm for the industry to accept that the creator is responsible for the creation.

But the realization does not stop at personal reflection. It transforms into action when the workforce looks at the balance of power.

Aiden sat down at the local pub with three of his colleagues, all of them nursing pints of flat lager, their faces illuminated by the glow of a single smartphone. They were young, brilliant, and deeply anxious. They were talking about the union.

For years, tech workers had operated under a system of individualistic bargaining. You negotiate your salary, you choose your projects, you quit if you are unhappy. The prevailing ideology was simple: if you don't like the project, go work for another tech giant.

But that logic had evaporated. The consolidation of artificial intelligence research into a handful of corporate entities meant that leaving one company did not mean escaping the ethical dilemma. The military contracts were becoming an industry standard. The only way to push back was to change the structure of the workplace itself.

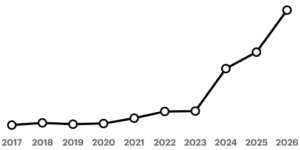

The movement began in the UK, with workers at Google DeepMind voting to unionize under Prospect. It was a historic moment, largely ignored by the mainstream financial press but felt deeply in the corridors of power. It represented a fundamental shift in how the creators of modern AI viewed their own agency.

The Mechanics of Dissent

To truly grasp the significance of this vote, you must understand the demographics of a machine learning research lab. These are individuals who command high salaries, possess immense leverage in the job market, and are used to operating with a high degree of autonomy. They are not the traditional blue-collar workforce of the industrial age. They are the cognitive elite of the twenty-first century.

When this specific group decides to organize, it signals a deeper moral crisis within the tech industry. It means that the moral injury of working on military applications outweighs the financial incentives of their compensation packages.

Consider how the union drive unfolded. It did not happen overnight. It started with whispered conversations in the break rooms. It grew through encrypted messaging apps. It coalesced during the Friday all-hands meetings, where the leadership’s evasive answers regarding defense contracts only fueled the anxiety of the staff.

The workers wanted a seat at the table. They wanted the right to veto projects that violated the company’s own stated ethical principles. The company had promised to be guided by a published set of AI principles, but those principles were interpreted by management, not by the people who built the underlying architecture.

The management argued that the contracts were necessary for geopolitical stability. They argued that if they did not build these tools, other actors would build them instead, with far less ethical oversight.

Aiden and his peers saw the flaw in this argument. The logic of the arms race always leads to the lowest common denominator of safety. If you build the tool, you are responsible for its deployment, regardless of who else might have built it.

The Anatomy of the Contract

The deal with the US military was not a simple exchange of software for money. It was a profound entanglement of data infrastructure and computational resources. The models developed by the DeepMind team were trained on vast amounts of data. When these models are exposed to military data, their nature changes.

The system learns to identify patterns of conflict. It learns the logistics of deployment. It begins to treat human populations as data points to be optimized.

The researchers knew this better than anyone else. They had designed the neural networks. They knew that the system's bias could be amplified in a high-stakes environment. A mistake in a medical diagnosis meant a patient might get the wrong treatment; a mistake in a military logistics model could mean the loss of human life.

The gravity of this reality was terrifying. Aiden remembered the night he spent trying to fix a hallucination in one of the team's deep learning models. The model was generating false information, a common flaw in large language models. He stared at the screen, realizing that if the same hallucination occurred in a defense context, the consequences would be catastrophic.

He tried to raise the issue with his manager. The response was dismissive. He was told to focus on optimization and leave the ethical considerations to the policy team. But policy teams do not write the code. They do not see the biases embedded deep within the model's weights.

The Vote for the Future

The vote to join Prospect was not just about better pay or clearer grievance procedures. It was a profound declaration of independence.

The unionization push represented a line in the sand. It said that the people who create the technology are not simply cogs in a corporate machine. They are the stewards of a power that could reshape the world for better or for worse.

When the results of the vote were announced, the London office was quiet. There were no cheers, no popping of champagne corks. There was only the quiet, heavy realization that the work had changed.

The management was forced to recognize the union, a move that set a precedent for the entire tech industry. It shattered the myth that high-level engineers would remain silent while their work was repurposed for purposes they found morally objectionable.

The implications of this move go far beyond the UK. In an interconnected world, the actions of a few hundred workers in London send ripples through the entire Silicon Valley ecosystem. They show that collective action is possible even in the most individualized, highly paid professions.

The Path Ahead

The story of the DeepMind unionization is not a story of a battle won. It is the beginning of a long, difficult negotiation over the soul of the digital age.

The question remains: Can a corporation driven by profit and shareholder value truly align its interests with the ethical concerns of its workforce?

The answer is uncertain. The tension between the commercialization of artificial intelligence and its human costs has not been resolved. The contracts remain in place. The data continues to flow.

But something has fundamentally shifted in the minds of the people who build the future. Aiden looked out at the rainy London street, the streetlights reflecting in the puddles. He no longer felt like a passive participant in a corporate experiment. He was part of a workforce that had found its voice. He knew the road ahead would be difficult. He knew there would be pressure from above to compromise.

Yet, as he turned back to his desk, he saw the lines of code on his screen not as an abstract set of instructions, but as a series of choices. The choices were his. The choices were theirs. And for the first time in his career, he had the power to make those choices count.